Superset and Druid - Sergio's Stumbles

The troubleshooting I had to do for the Superset and Druid blog post

This is a companion blog post to the Superset and Druid blog post.

Why add stumbles? The not so happy path is also useful to know.

I find that good blog posts guide you through the happy path, which is incredibly useful and likely helps circumvent common mistakes. But as I built the blog post, I realized that I run into a bunch of issues related mostly to the new combination of components I am trying to test; they are at least new for me. I think that others might find my stumbles just as useful in case they run into them. I’ll try to include the whole and sometimes embarrassing story as companions to the happy path blog posts.

Druid is not listed as a supported database

First struggle: I started with the docker compose path for both Preset and Druid because they were both readily available in the instructions and a recent Q&A session in Apache Druid Forum ran into issues using this config when setting up authentication on the Apache Druid side, so I wanted to walk in that direction and kill two birds with one stone. But Murphy, my lifetime companion, had a few things to say.

The first set of instructions I followed and wrote were:

Install SuperSet

Following instructions from https://superset.apache.org/docs/installation/installing-superset-using-docker-compose

git clone https://github.com/apache/superset.gitI had actually done this in the semi-recent past, and didn’t think to refresh the superset code before I started.

cd superset

# start superset cluster

docker compose -f docker-compose-non-dev.yml pull

docker compose -f docker-compose-non-dev.yml upInstall Druid

Following instructions from https://github.com/apache/druid/tree/master/distribution/docker#run

git clone https://github.com/apache/druid.git

cd druid

# start druid cluster

docker-compose -f distribution/docker/docker-compose.yml upI then read about setting up Druid Connection in Superset and this and realized that I needed to adjust the Superset config, rebuild and relaunch in order to enable Druid integration. I was wrong about this (in the end the docker-compose-non-dev.yml was the way to go.

Following those instructions I stopped Superset cluster on Docker Desktop and tried:

git pull

# add druid to the configuration

touch ./docker/requirements-local.txt

echo "pydruid" >> docker/requirements-local.txt

# rebuild

docker-compose build --force-rm

docker-compose upTurned out to be a very different deployment this way which resulted in other problems.

Postgres pod won’t start

This failed because I was using a different docker-compose file which had changed the version of the postgres image it uses and I had to do some cleanup. The volume created in docker persisted from one attempt to the other and postgres was complaining about the file structures from postgres v 10 not being compatible with v 14. So I removed the volume for all the superset pods and restarted the operation:

docker-compose build --force-rm

docker-compose upBut Superset continued to fail, the UI looks like this:

Two of the containers failed to run:

Here’s the log output for the failed containers:

superset_websocket | config.json file not found

superset_websocket | {"error":{},"level":"error","message":"uncaughtException: Please provide a JWT secret at least 32 bytes long\nError: Please provide a JWT secret at least 32 bytes long\n at Object.<anonymous> (/home/superset-websocket/dist/index.js:71:11)\n at Module._compile (node:internal/modules/cjs/loader:1155:14)\n at Object.Module._extensions..js (node:internal/modules/cjs/loader:1209:10)\n at Module.load (node:internal/modules/cjs/loader:1033:32)\n at Function.Module._load (node:internal/modules/cjs/loader:868:12)\n at Function.executeUserEntryPoint [as runMain] (node:internal/modules/run_main:81:12)\n at node:internal/main/run_main_module:22:47","stack":"Error: Please provide a JWT secret at least 32 bytes long\n at Object.<anonymous> (/home/superset-websocket/dist/index.js:71:11)\n at Module._compile (node:internal/modules/cjs/loader:1155:14)\n at Object.Module._extensions..js (node:internal/modules/cjs/loader:1209:10)\n at Module.load (node:internal/modules/cjs/loader:1033:32)\n at Function.Module._load (node:internal/modules/cjs/loader:868:12)\n at Function.executeUserEntryPoint [as runMain] (node:internal/modules/run_main:81:12)\n at node:internal/main/run_main_module:22:47","exception":true,"date":"Thu Nov 17 2022 00:09:53 GMT+0000 (Coordinated Universal Time)","process":{"pid":18,"uid":1000,"gid":1000,"cwd":"/home/superset-websocket","execPath":"/usr/local/bin/node","version":"v16.18.1","argv":["/usr/local/bin/node","/home/superset-websocket/dist/index.js","start"],"memoryUsage":{"rss":55349248,"heapTotal":18354176,"heapUsed":13019312,"external":1146687,"arrayBuffers":243762}},"os":{"loadavg":[3.77,3.98,2.47],"uptime":8155.06},"trace":[{"column":11,"file":"/home/superset-websocket/dist/index.js","function":null,"line":71,"method":null,"native":false},{"column":14,"file":"node:internal/modules/cjs/loader","function":"Module._compile","line":1155,"method":"_compile","native":false},{"column":10,"file":"node:internal/modules/cjs/loader","function":"Module._extensions..js","line":1209,"method":".js","native":false},{"column":32,"file":"node:internal/modules/cjs/loader","function":"Module.load","line":1033,"method":"load","native":false},{"column":12,"file":"node:internal/modules/cjs/loader","function":"Module._load","line":868,"method":"_load","native":false},{"column":12,"file":"node:internal/modules/run_main","function":"Function.executeUserEntryPoint [as runMain]","line":81,"method":"executeUserEntryPoint [as runMain]","native":false},{"column":47,"file":"node:internal/main/run_main_module","function":null,"line":22,"method":null,"native":false}]}

superset_websocket | npm notice

superset_websocket | npm notice New major version of npm available! 8.19.2 -> 9.1.2

superset_websocket | npm notice Changelog: <https://github.com/npm/cli/releases/tag/v9.1.2>

superset_websocket | npm notice Run `npm install -g npm@9.1.2` to update!

superset_websocket | npm notice

superset_websocket exited with code 1

superset_tests_worker | 2022-11-17 00:10:06,416:ERROR:flask_appbuilder.security.sqla.manager:DB Creation and initialization failed: (psycopg2.OperationalError) could not connect to server: Connection refused

superset_tests_worker | Is the server running on host "localhost" (::1) and accepting

superset_tests_worker | TCP/IP connections on port 5432

superset_tests_worker | could not connect to server: Connection refused

superset_tests_worker | Is the server running on host "localhost" (127.0.0.1) and accepting

superset_tests_worker | TCP/IP connections on port 5432?superset_tests_worker |

superset_tests_worker | (Background on this error at: https://sqlalche.me/e/14/e3q8)

superset_tests_worker | Loaded your LOCAL configuration at [/app/docker/pythonpath_dev/superset_config.py]

superset_tests_worker exited with code 1Googled “superset_websocket fails docker compose config.json” and found a different issue, but it had the suggestion to do `docker-compose down` first, so I decided to try that, but I had the suspicion that something else was amiss. So I reverted to the non-dev compose but I wasn’t sure this would include the pydruid library.

docker compose down

docker compose -f docker-compose-non-dev.yml upI was wrong, the docker-compose-non-dev.yml worked and did provide access to the Druid database connection correctly. As it turns out the docker-compose.yml file uses other these additional pods in the deployment which the non dev version does not. Since I was not interested in development of Superset for now, I ended up using the non-dev version which worked well.

This one is just bad short term memory

But I forgot to remove the volumes again, which resulted in the postgres_db pod having trouble starting again. So I had to do the volume cleanup again… I also realized that the postgres version difference is between the two different docker compose files, and so I started back up:

docker compose down(delete volumes in docker dashboard)

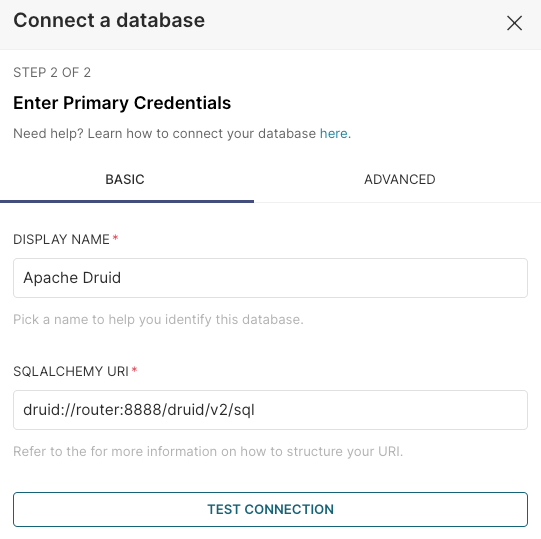

docker compose -f docker-compose-non-dev.yml upThis seemed to be working. And I could see the Superset UI. I added the connection to Druid.

But Murphy wasn’t done with me yet …

Could not establish the connection to Druid

What I tried was:

Test connection failed, I suspected a network issue between the two docker compose deployments, so I used:

docker network ls I could see which networks were set up on docker. There was “docker_default” which is the name for network in the Druid deployment. Docker compose creates a network called “<docker_compose_folder>_default” if you don’t specify one. <docker_compose_folder> is where the docker compose file lives, in the case of superset, this is why it was defaulting to a different network called “superset_default”. I read this.

So I updated the docker compose file for superset and set the networks section.

I appended this to the docker-compose-non-dev.yml for superset:

networks:

default:

name: docker_defaultand tried again

docker compose -f docker-compose-non-dev.yml upThat did it! The connection works now.

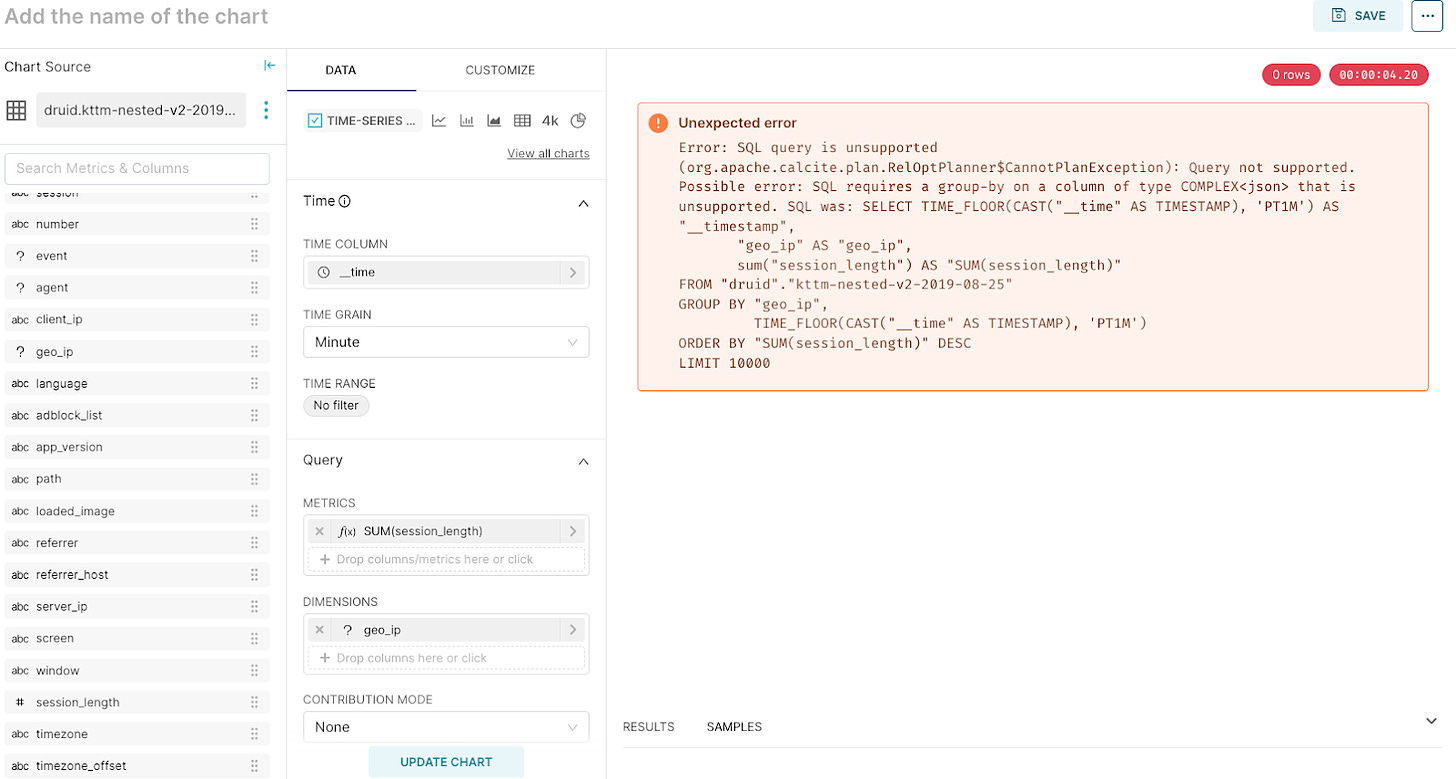

SQL Query is unsupported

After establishing the connection I tried creating my first time series chart and I ran into this:

I selected “geo_ip” as a dimension, which causes a problem because it is a JSON column, so even though I didn’t set out to use Nested JSON on Superset and I could just change it to a string column and be done, I kept going because I like a challenge. “How to setup a Superset chart when using nested JSON”, so here we go. I thought that if superset has the concept of a view, I could translate the JSON fields into columns using Druid’s JSON_VALUE functions.

I found that you can add a custom dataset in Superset as a SQL statement, from the + dropdown by selecting “SQL query”

I selected a few columns and metrics from both the JSON and regular druid columns:

SELECT "__time", "referrer", "session_length",

JSON_VALUE("geo_ip", '$.continent') AS continent,

JSON_VALUE("geo_ip", '$.country') AS country,

JSON_VALUE("geo_ip", '$.region') AS region,

JSON_VALUE("event", '$.type') AS event_type,

JSON_VALUE("event", '$.percentage') AS percent_clear,

JSON_VALUE("event", '$.layer') AS layer

FROM "druid"."kttm-nested-v2-2019-08-25"I clicked “SAVE” and named it “flattened_kttm_view”

Back to the chart, clicked “CREATE CHART” from the query window.

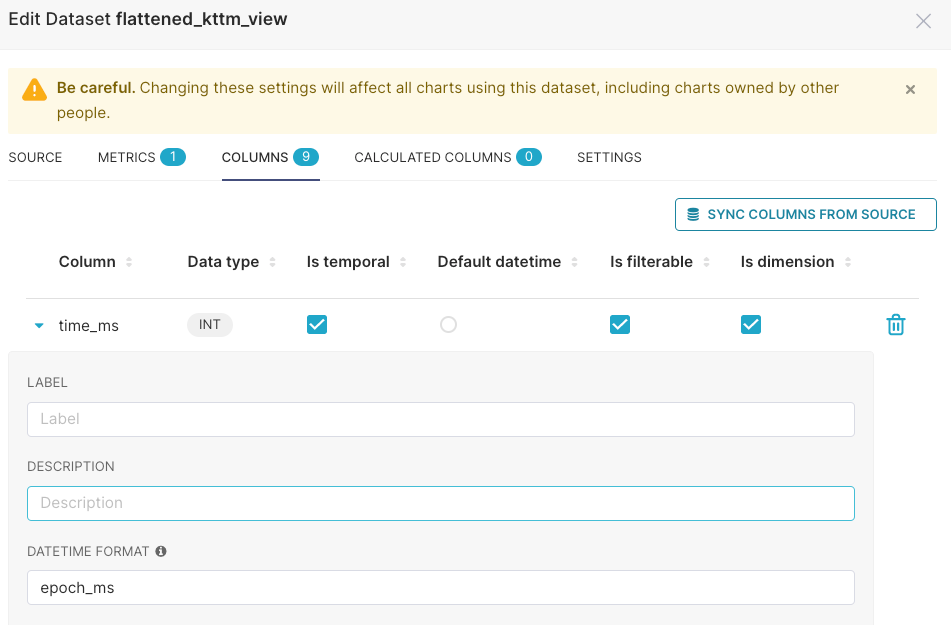

When I tried to use a time series visualization I couldn’t because the __time field was being identified as a string, instead of a timestamp. Murphy!

Tried casting it as a TIMESTAMP and also setting the time format on the chart view, edit dataset option to set the column info:

I tried :

SELECT CAST(__time AS BIGINT) "time_ms", "referrer", "session_length",

JSON_VALUE("geo_ip", '$.continent') AS continent,

JSON_VALUE("geo_ip", '$.country') AS country,

JSON_VALUE("geo_ip", '$.region') AS region,

JSON_VALUE("event", '$.type') AS event_type,

JSON_VALUE("event", '$.percentage') AS percent_clear,

JSON_VALUE("event", '$.layer') AS layer

FROM "druid"."kttm-nested-v2-2019-08-25" Given that I was using the long representation of time, I tried to use epoch_ms as the DATETIME format to see if it recognized it as a time field, but that wasn’t enough either.

I turns out that the key was to check the box for “Is temporal” for the time column

I could not select any metrics for the chart

In the Superset Create Chart view, none of fields from my query were selectable as metrics because they were all identified as strings. This was a side effect from using the JSON_VALUE function which returns to string data type by default. I changed the SQL for the metric columns coming from JSON with this final SQL:

SELECT __time, "referrer", "session_length",

JSON_VALUE("geo_ip", '$.continent') AS continent,

JSON_VALUE("geo_ip", '$.country') AS country,

JSON_VALUE("geo_ip", '$.region') AS region,

JSON_VALUE("event", '$.type') AS event_type,

JSON_VALUE("event", '$.percentage' RETURNING FLOAT) AS percent_clear,

JSON_VALUE("event", '$.layer' RETURNING FLOAT) AS layer

FROM "druid"."kttm-nested-v2-2019-08-25" I added “RETURNING FLOAT” to the JSON_VALUE functions that are for numeric values. Also notice that in this final SQL, I did not have to convert __time, I just had to set the “Is Temporal” flag in the dataset.

Conclusion

That’s all folks, these are the problems I ran into and how I solved them, if you run into any other problems while doing this, please leave a comment and we can expand this troubleshooting guide.